Overview

- Effectively translating business requirements to a data-driven solution is key to the success of your data science project

- Hear from a data science leader on his experience and thoughts on how to bridge this gap

Introduction

How effectively can you convert a business problem into a data problem?

This question holds the key to unlocking the potential of your data science project. There is no one-size-fits-all approach here. This is a nontrivial effort with positive long-term results and hence deserves a great deal of focused collaboration across the product team, the data science team, and the engineering team.

Every leader knows that being able to measure progress is an invaluable aspect of any project. This understanding goes to an entirely different level when it comes to data science projects.

We discussed how to manage the different stakeholders in data science in my previous article (recap below). In this article, we are going to discuss the journey of translating the broad qualitative business requirements into tangible quantitative data-driven solutions.

One of the most tangible advantages of this approach, among many others, is that it establishes a common understanding of what ‘success’ means and how we can measure it. It also lays a framework for how progress will be tracked and communicated among the various internal and external stakeholders.

This is the second article of a four-article series that discusses my learnings from developing data-driven products from scratch and deploying them in real-world environments where their performance influences the client’s business/financial decisions. You can read articles one and three here:

- Article #1: A Data Science Leader’s Guide to Managing Stakeholders

- Article #3: 4 Key Aspects of a Data Science Project Every Data Scientist and Leader Should Know

Table of Contents

- Quick Recap of Managing Different Data Science Stakeholders (Article #1)

- Bridging the Qualitative-to-Quantitative Gap in Data Science

- Is the Right Data Available with the Right Level of Granularity?

- Are We Asking the Right Questions?

- Repeatability and Reproducibility: Consistency in Labeled Data for Accurate AI Systems

- Active Learning for Efficient and More Accurate AI Systems

- Diverse Data Science Team Composition is Critical for Success

Quick Recap of Managing Different Data Science Stakeholders (Article #1)

Let me quickly recap what we covered in the first article of this series. It’s important to have this background before reading further, as it is the foundation on which this article builds.

We discussed the three key stakeholders in a data-driven product ecosystem and how the data-science-delivery leader has to align them with each other. The three main stakeholders are:

- The customer-facing team: This team is tasked with the dual responsibility of ensuring that the internal teams act on customers’ feedback/concerns in a timely manner and also of gauging the customers’ unmet needs. When it comes to data-driven products, the customer-facing team, and through them the customers, have to be educated on the ‘illusion of 100% accuracy’ and ‘continuous improvement process’ which are unique to these data-driven products

- The executive team: It is critical to get the executive team’s buy-in on the unique development, deployment and maintenance cycles of data-driven products. It is also important to help the executives distinguish between the low-stakes ‘consumer-AI’ image that the popular discourse has created versus the high-stakes ground reality of ‘enterprise-AI’ that the corporates will commonly face

- The data science team: Given the pace at which the data science field is evolving, there is always something new (and potentially fancier) to learn. While the core data science team may be tempted to periodically apply the newer technologies, the data science delivery leader has to regularly remind the team that AI is only one part of the whole puzzle and that the ‘appropriateness’ of the technology matters more than its ‘coolness’

With that background, let’s dive into this article!

Bridging the Qualitative-to-Quantitative Gap in Data Science

- During a regular weekday lunch, as you are discussing how everybody’s weekend was, one of your colleagues mentions she watched a particular movie that you have also been wanting to watch. To know her feedback on the movie, you ask her – “Hey, was the movie’s direction up to the mark?”

- You bump into a colleague in the hallway whom you haven’t seen for a couple of weeks. She mentions she just returned from a vacation at a popular international destination. To know more about the destination, you ask her – “Wow! Is it really as exotic as they show in the magazines?”

- Your roommate got a new video game that he has been playing nonstop for a few hours. When he takes a break, you ask him – “Is the game really that cool?”

Did you find any of these questions ‘artificial’? Do re-read the scenarios and take a few seconds to think through. Most of us would find these questions to be perfectly natural!

What would certainly be artificial though is asking questions like:

- ‘Hey, was the movie direction 3.5-out-of-5?’, or

- ‘Is the vacation destination 8 on a scale of 1-to-10?’, or

- ‘Is the video game in the top 10 percent of all video games?’

In most scenarios, we express our asks in qualitative terms. This is true about business requirements as well.

Isn’t it more likely that the initial client ask will be “Build us a landing page which is aesthetically pleasing yet informative” versus “we need a landing page which is rated at least 8.5-out-of-10 by 1000 random visitors to our website on visual-appeal, navigability and product-information parameters”?

On the other hand, systems are built and evaluated based on exact quantitative requirements. For example, the database query has to return in less than 30 milliseconds, the website has to fully load in less than 3 seconds on a typical 10 Mbps connection, and so on.

This gap between qualitative business requirements and quantitative machine requirements is exacerbated when it comes to data-driven products.

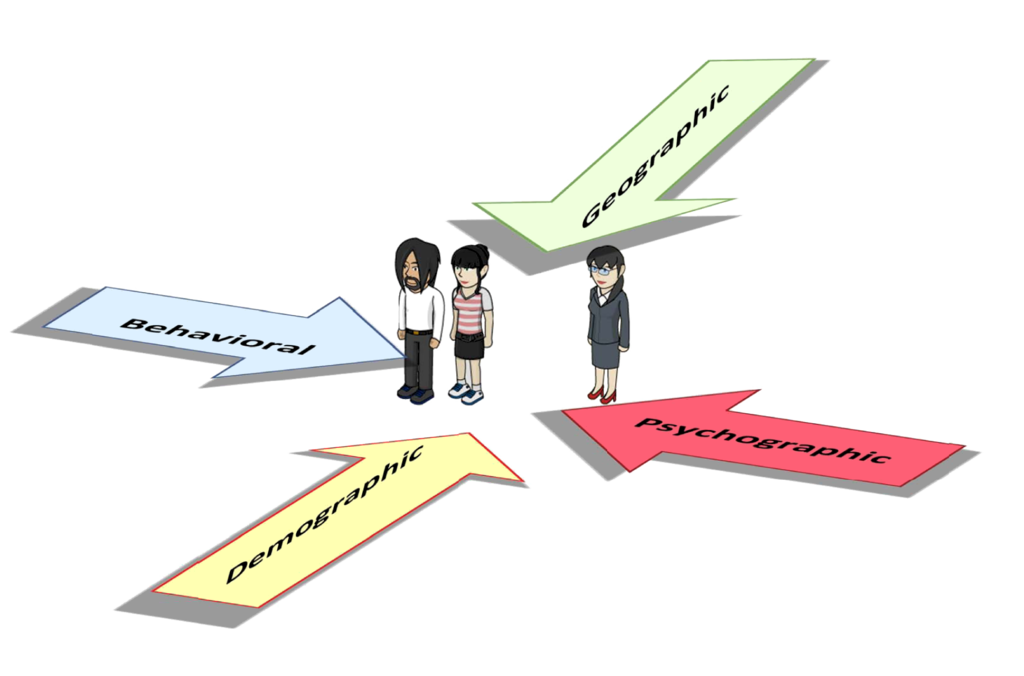

A typical business requirement for a data-driven product could be “develop an optimal digital marketing strategy to reach the likely target customer population”. Converting this to a quantifiable requirement has several non-trivial challenges. Some of these are:

- How do we define ‘optimal’? Do we focus more on precision or more on recall? Do we focus more on accuracy (is the approached customer segment really our target customer segment or not)? Or do we focus more on efficiency (how quickly do we make a go/no-go decision once the customer segment is exposed to our algorithm)?

- How do we actually evaluate if we have met the optimal criteria? And if not, how much of a gap exists?
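To make the precision-versus-recall question concrete, here is a minimal sketch in Python. It assumes we can represent the customers we approached and the customers who truly belong to the target segment as sets of IDs; the function name and the customer IDs are hypothetical and only for illustration.

```python
def precision_recall(targeted, actual_targets):
    """Return (precision, recall) for a marketing campaign.

    targeted: ids of the customers the strategy chose to approach
    actual_targets: ids of the customers who truly belong to the target segment
    """
    targeted = set(targeted)
    actual_targets = set(actual_targets)
    hits = len(targeted & actual_targets)
    # Precision: of the customers we approached, how many were real targets?
    precision = hits / len(targeted) if targeted else 0.0
    # Recall: of the real targets, how many did we manage to approach?
    recall = hits / len(actual_targets) if actual_targets else 0.0
    return precision, recall


# Hypothetical campaign data, for illustration only
approached = {"c1", "c2", "c3", "c4"}
in_segment = {"c2", "c3", "c4", "c5", "c6"}
precision, recall = precision_recall(approached, in_segment)
print(f"precision={precision:.2f}, recall={recall:.2f}")
```

Agreeing up front on which of the two numbers matters more, and on a target value for it, is one way to turn ‘optimal’ into something that can be measured and tracked.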

To define customers ‘similar’ to our target population, we need to agree on a set of N dimensions that will be used for computing this similarity:

- Patterns in the browsing history

- Patterns in e-shopping

- Patterns in user-provided meta-data, and so on. Or do we need to devise a few other dimensions?
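As a rough illustration of computing similarity over an agreed set of N dimensions, the sketch below uses cosine similarity. This is only one possible choice of measure, not necessarily the right one for every product, and the three-dimensional profiles (browsing, e-shopping, meta-data scores) are hypothetical values.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length numeric profiles."""
    if len(a) != len(b):
        raise ValueError("profiles must use the same N dimensions")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        # An all-zero profile carries no information to compare against
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical scores on [browsing, e-shopping, meta-data] dimensions
target_profile = [0.8, 0.6, 0.1]
candidate_profile = [0.7, 0.5, 0.2]
print(round(cosine_similarity(target_profile, candidate_profile), 3))
```

Whatever measure is chosen, the key point is that all the teams agree on the dimensions that go into the profile before any similarity is computed.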

After that, we need to critically evaluate whether all the relevant data exists in an accessible format. If not, are there ways to infer at least parts of it?

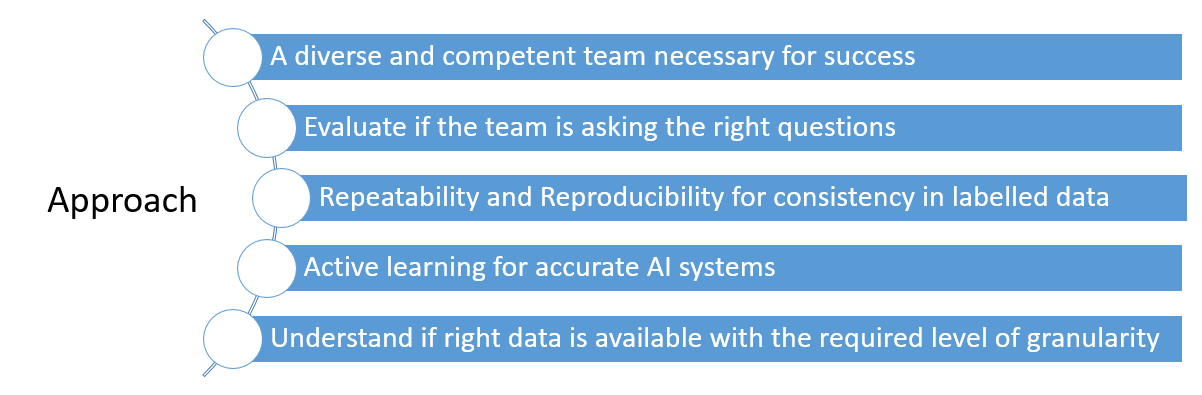

Is the Right Data Available with the Right Level of Granularity?

Consider a business scenario where a company has a chatbot that handles customer queries automatically. When the chatbot fails to resolve a customer query, the call is transferred to a human expert.

It is fair to assume that the cost of a human expert manning a call center is higher than that of an automated chatbot resolving the customer query. Thus, the business problem can be stated as: Reduce the proportion of calls that reach a human expert.

The first barrier to cross is often the HiPPO Effect.

Simply put, the HiPPO (Highest Paid Person’s Opinion) effect states that the authority figure’s suggestions are interpreted as the final truth, and promptly implemented, even if the findings from the data are contrary.

For instance, in the above example, the HiPPO might be that calls are getting diverted to human experts due to time-out issues related to network connectivity within the chatbot’s workflow. A more prudent data-driven approach would be to list out all the possible reasons leading to call diversions, one of them being the connectivity issue.

Such a list can be derived from a combination of expert knowledge and some initial data log analysis. This step falls under what we call the ‘data-discovery’ phase.

The data-discovery phase, which is essentially an iterative process, systematizes the use of insights from the data to guide the expert’s intuition and to identify the next dimension of data to investigate.

The data-discovery phase also identifies if there are any gaps in the ‘ideal-data-needed’ vs. ‘actual-data-available’. For example, we may identify that the last interaction between the chatbot and the customer is not being stored in the database. This lack of data needs to be solved promptly by changing the data storage schema.

Source: Yseop

Let’s assume that this analysis of possible failure scenarios led to the following findings:

- The chatbot did not understand the intent

- The chatbot is not able to establish a connection with the knowledge base

- The chatbot is not able to retrieve relevant information from the knowledge base before the time-out

- There is no relevant information in the knowledge-base, or

- Unknown/non-replicable issues

Armed with this information, the next step would be to dig deeper. For example:

- Is the intent not understood because the speech-to-text component failed or the text-to-intent mining component misfired?

- Is the time-out occurring because the information is not stored in the right format (e.g., suboptimal inverted index)? or

- Is the information not easily accessible (e.g., held in an LRU cache vs. fetched over a network call to a store like Elasticsearch)? and so on

The findings from this step will help rank the problems in terms of their prevalence and also identify systemic issues. If the failure of the speech-to-text component is one of the prevalent problems, the speech-to-text vendor needs to be approached to identify if the speech inputs are not being captured/transferred as per the norms/best-practices or if the speech-to-text system needs more context for better predictions.
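Ranking the problems by prevalence can start with something as simple as counting. The sketch below assumes each diverted call has already been tagged with a single failure reason during data discovery; the record layout and the reason names are hypothetical.

```python
from collections import Counter


def rank_failure_reasons(diverted_calls):
    """Return (reason, count, share) tuples, most prevalent reason first."""
    counts = Counter(call["reason"] for call in diverted_calls)
    total = sum(counts.values())
    return [(reason, n, n / total) for reason, n in counts.most_common()]


# Hypothetical log of calls that were diverted to a human expert
diverted_calls = [
    {"call_id": 1, "reason": "intent_not_understood"},
    {"call_id": 2, "reason": "kb_timeout"},
    {"call_id": 3, "reason": "intent_not_understood"},
    {"call_id": 4, "reason": "unknown"},
    {"call_id": 5, "reason": "intent_not_understood"},
    {"call_id": 6, "reason": "kb_timeout"},
]
for reason, count, share in rank_failure_reasons(diverted_calls):
    print(f"{reason}: {count} calls ({share:.0%})")
```

A ranking like this gives the team evidence to weigh against the HiPPO and a simple baseline against which later improvements can be measured.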

Are We Asking the Right Questions?

Moving further along in this journey, translating qualitative, data-specific questions into quantitative model training strategies is also a nuanced topic, one that can have far-reaching consequences.

Continuing the conversation on speech-to-text issues, it may seem prudent to answer ‘who is the caller?’. On the surface, this may seem synonymous with ‘is the caller Miss Y?’. But these two questions lead to totally different Machine Learning (ML) models.

The ‘who is the caller?’ question leads to a single N-class classification problem (where N is the number of possible callers), whereas ‘is the caller Miss Y?’ is a binary question about one caller. Asking it for every possible caller leads to N binary classifiers!
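The difference between the two framings shows up as soon as we prepare the training targets. The sketch below is a simplified illustration with hypothetical caller names: the first function builds one target column with N possible classes, and the second builds one binary target column per caller.

```python
def multiclass_targets(callers):
    """'Who is the caller?': one target column with N possible classes."""
    classes = sorted(set(callers))
    index = {name: i for i, name in enumerate(classes)}
    return classes, [index[name] for name in callers]


def one_vs_rest_targets(callers):
    """'Is the caller X?': one binary target column for each caller X."""
    classes = sorted(set(callers))
    return {
        name: [1 if caller == name else 0 for caller in callers]
        for name in classes
    }


# Hypothetical caller identities for four recorded calls
callers = ["Miss Y", "Mr Z", "Miss Y", "Mrs W"]
print(multiclass_targets(callers))
print(one_vs_rest_targets(callers)["Miss Y"])
```

In the first framing a single model must separate all callers at once. In the second, each model only has to recognize one caller, but there are N such models to train, evaluate and maintain.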

While all of this may seem complex and data science-led, we cannot underestimate the role of the domain expert. While all errors are mathematically equal, some errors can be more damaging to the company’s finances and reputation than others.

Domain experts play a critical role in understanding the impact of these errors. Domain experts also help lay out the best practices in the industry, understand customer expectations and adhere to regulatory requirements.
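One way to capture this domain knowledge quantitatively is to weight each type of error by its business cost. The sketch below is a minimal illustration; the intent labels and the cost values are hypothetical and would in practice come from the domain experts.

```python
def total_error_cost(y_true, y_pred, cost):
    """Sum the business cost of every misclassified sample.

    cost maps (true_label, predicted_label) to a cost; pairs that are
    not listed are treated as costing 0.
    """
    return sum(
        cost.get((truth, guess), 0.0)
        for truth, guess in zip(y_true, y_pred)
        if truth != guess
    )


# Hypothetical costs: treating a cancellation as a renewal hurts far more
# than treating a renewal as a cancellation
cost = {("renew", "cancel"): 1.0, ("cancel", "renew"): 10.0}
y_true = ["renew", "cancel", "renew"]
y_pred = ["cancel", "renew", "renew"]
print(total_error_cost(y_true, y_pred, cost))
```

Two models with the same error count can then be compared on how expensive their errors are, not just on how many they make.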

For example, even if the chatbot is 100% confident that the user has asked for a renewal of a relatively inexpensive service, the call may need to be routed to a human for regulatory compliance purposes depending on the nature of the service.
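A routing rule of this kind could look like the sketch below. The set of regulated intents, the intent names and the confidence threshold are all hypothetical; in a real system they would be defined together with the domain and compliance experts.

```python
# Hypothetical intents that must always be handled by a human for compliance
REGULATED_INTENTS = {"renew_insurance_policy"}


def route_call(intent, confidence, threshold=0.9):
    """Decide whether the chatbot or a human expert handles the request."""
    if intent in REGULATED_INTENTS:
        # The compliance rule overrides the model, however confident it is
        return "human"
    if confidence < threshold:
        # The model is not sure enough about what the customer asked for
        return "human"
    return "chatbot"


print(route_call("renew_insurance_policy", confidence=1.0))
print(route_call("check_balance", confidence=0.95))
```

Note that the compliance check comes before the confidence check, so no level of model confidence can bypass it.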

Repeatability and Reproducibility: Consistency in Labeled Data for Accurate AI Systems

One of the final steps is to have a relevant subset of data labeled by human experts in a consistent manner.

At the vast scale of Big Data, we are talking about obtaining labels for hundreds of thousands of samples. This will need a huge team of human experts to provide the labels.

A more efficient way would be to sample the data in such a manner that only the most diverse set of samples is sent for labeling. One of the best ways to do this is to use stratified sampling. Domain experts will need to analyze which data dimensions get used for the stratification.
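Here is a minimal sketch of stratified sampling, assuming the domain experts have already chosen the stratification dimension (a hypothetical `language` field in this example). It draws the same number of samples from every stratum, which is only one of several possible allocation schemes.

```python
import random
from collections import defaultdict


def stratified_sample(records, stratum_key, per_stratum, seed=0):
    """Draw up to per_stratum records at random from each stratum."""
    rng = random.Random(seed)  # fixed seed so the draw can be repeated
    strata = defaultdict(list)
    for record in records:
        strata[stratum_key(record)].append(record)
    sample = []
    for key in sorted(strata):
        group = strata[key]
        # Small strata contribute everything they have
        sample.extend(rng.sample(group, min(per_stratum, len(group))))
    return sample


# Hypothetical call records: English dominates, Spanish and French are rare
records = (
    [{"id": i, "language": "en"} for i in range(8)]
    + [{"id": 8 + i, "language": "es"} for i in range(2)]
    + [{"id": 10, "language": "fr"}]
)
to_label = stratified_sample(records, lambda r: r["language"], per_stratum=2)
print(sorted(r["language"] for r in to_label))
```

Compared with a purely random draw, this keeps the rare strata from being crowded out by the dominant one in the labeled set.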

Consistency in human labels is trickier than it may seem at first. If the existing automated techniques for label generation are 100% accurate, then there is no need for training any newer machine learning algorithms. And hence, there is no need for human-labeled training samples (e.g., we do not need manual transcription of speech if speech-to-text systems are 100% accurate).

At the same time, if there is no subjectivity in human labeling, then it is just a matter of tabulating the list of steps that the human expert has followed and automating those steps. Almost all practical machine learning systems need training because they are not able to adequately capture the various nuances that humans apply in coming to a particular decision.

Thus, there will be a certain level of inherent subjectivity in the human labels that can’t be done away with.

The goal, however, should be to design label-capturing systems that minimize avenues for ‘extraneous’ subjectivity.

For example, if we are training a machine learning system to predict emotion from speech, the human labels will be generated by playing the speech signals and asking the human labeler to provide the predominant emotion.

One way to minimize extraneous subjectivity is to provide a drop-down of the possible emotion label options instead of letting the human labeler enter his/her inputs in a free flow text format. Similarly, even before the first sample gets labeled, there should be a normalization exercise among the human experts where they agree on the interpretation of each label (e.g., what is the difference between ‘sad’ and ‘angry’).

An objective way to check the subjectivity is ‘repeatability and reproducibility (R&R)’. Repeatability measures the impact of temporal context on human decisions. It is computed as follows:

- The same human expert is asked to label the same data sample at two different times

- The proportion of samples on which the expert agrees with their own earlier label is called repeatability

Reproducibility measures how consistently the labels can be replicated across experts. It is computed as follows:

- Two or more human experts are asked to label the same data samples in the same setting

- The proportion of samples on which the experts agree among themselves is called reproducibility

Conducting R&R evaluations on even a small scale of data can help identify process improvements as well as help gauge the complexity of the problem.
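The two measures above can be sketched in a few lines of Python. One assumption to flag: when more than two experts are involved, this sketch counts a sample as ‘agreed’ only if all experts gave the same label; other definitions (such as average pairwise agreement) are also reasonable. The emotion labels are hypothetical.

```python
def repeatability(first_pass, second_pass):
    """Share of samples where one expert gave the same label both times."""
    if not first_pass or len(first_pass) != len(second_pass):
        raise ValueError("need two non-empty passes over the same samples")
    agreed = sum(a == b for a, b in zip(first_pass, second_pass))
    return agreed / len(first_pass)


def reproducibility(labels_by_expert):
    """Share of samples where every expert gave the same label."""
    per_sample = list(zip(*labels_by_expert))
    if not per_sample:
        raise ValueError("need at least one expert and one sample")
    unanimous = sum(len(set(labels)) == 1 for labels in per_sample)
    return unanimous / len(per_sample)


# Hypothetical emotion labels for four speech samples
monday = ["sad", "angry", "happy", "sad"]
friday = ["sad", "sad", "happy", "sad"]
print(repeatability(monday, friday))

experts = [
    ["sad", "angry", "happy", "sad"],
    ["sad", "angry", "sad", "sad"],
    ["sad", "happy", "happy", "sad"],
]
print(reproducibility(experts))
```

Low values on either measure suggest that the label definitions or the labeling interface need another look before more data is sent for labeling.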

Active Learning for Efficient and More Accurate AI Systems

Machine learning is typically ‘passive’. This means that the machine doesn’t proactively ask for human labels on the samples it finds most confusing. Instead, the machines are trained on whatever labeled samples are fed to the training algorithms.

A relatively new branch of machine learning called Active Learning tries to address this. It does so by:

- First training a relatively simple model with limited human labels, and then

- Proactively highlighting only those samples where the model’s prediction confidence is below a certain threshold

The human labels are sought on priority for such ‘confusing samples’.
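The selection step can be sketched as follows. It assumes an initial model has already produced class probabilities for each unlabeled sample; the sample IDs, the probabilities and the 0.6 threshold are hypothetical. Using the highest class probability as the confidence score is one common choice among several.

```python
def select_for_labeling(predictions, threshold=0.6):
    """Return ids of 'confusing' samples, least confident first.

    predictions maps a sample id to the model's class probabilities.
    A sample is confusing when its highest class probability is below
    the threshold.
    """
    confusing = [
        (max(probs), sample_id)
        for sample_id, probs in predictions.items()
        if max(probs) < threshold
    ]
    confusing.sort()  # lowest confidence comes first
    return [sample_id for _, sample_id in confusing]


# Hypothetical model outputs on three unlabeled samples
predictions = {
    "s1": [0.90, 0.10],
    "s2": [0.55, 0.45],
    "s3": [0.40, 0.35, 0.25],
}
print(select_for_labeling(predictions))
```

The returned list can be handed to the human labelers as a priority queue, after which the model is retrained and the cycle repeats.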

Diverse Data Science Team Composition is Critical for Success

For all the pieces to come together, we need an “all-rounder” data science team:

- It is absolutely critical that the team has data scientists who are trained to think in a data-driven manner. They should also be able to connect the problem at hand with established machine learning frameworks

- The team needs Big Data engineers who have expertise in data pipelining and automation. They should also understand, among other things, the various design factors that contribute to latency

- The team also needs domain experts. They can truly guide the rest of the members and the machine to interpret the data in ways consistent with the end customer’s needs

End Notes

We covered quite a lot of ground here. We discussed the nuances of translating a qualitative business requirement into tangible, quantitative, data-driven requirements.

Reach out to me in the comments section below if you have any questions. I would love to hear your experience on this topic.

In the third article of this series, we will discuss various deployment aspects as the data-driven product gets ready for real-world deployment. So watch this space!