This article was published as a part of the Data Science Blogathon

Introduction to BERT:

BERT stands for Bidirectional Encoder Representations from Transformers. It was introduced in 2018 by Google Researchers. BERT achieved state-of-art performance in most of the NLP tasks at that time and drawn the attention of the data science community worldwide.

It is extensively used today by data science practitioners for various NLP tasks. Details about the working of the BERT model can be found here.

Introduction to Natural Language Inference:

Natural Language Inference is a task in NLP where we are given two sentences namely premise and hypothesis. We have to predict whether the hypothesis given is True, False or not related with respect to Premise. We call it entailment for True, contradiction for False and neutral for undetermined or not related. Also, We can understand it with the following examples:

- Entailment: A person is riding a horse & A person is outdoor on a horse.

- Contradiction: A person is wearing blue jeans & a person is wearing black jeans.

- Neutral: Person is riding bicycle & Person is training his horse.

In this article, we are going to use BERT for Natural Language Inference (NLI) task using Pytorch in Python. The working principle of BERT is based on pretraining using unsupervised data and then fine-tuning the pre-trained weight on task-specific supervised data. BERT is based on deep bidirectional representation and is difficult to pre-train, takes lots of time and requires huge computational resources.

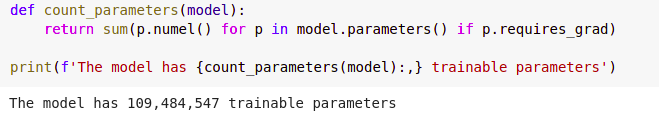

Two model sizes are available for BERT where BERT-base has around 110M parameters and BERT-large has 340M parameters. Pre-trained weights can be easily downloaded using the transformers library. Here, we are going to download these pre-trained weights and then we will fine-tune these weights for the NLI task using the SNLI dataset.

Let’s start with implementation and we can get further details in their specific sections.

Setting up of training environment

We are going to use GPU for training our model (Code will run without GPU too but that will take lots of time). We will use NVIDIA’s open-source “apex.amp” tool for automatic mixed-precision training.

This feature enables automatic conversion of certain GPU operations from precision to mixed-precision, thus improving performance while maintaining accuracy. Comment out if this is already installed in your system.

!git clone https://github.com/NVIDIA/apex cd apex !pip install -v --disable-pip-version-check --no-cache-dir --global-option="--cpp_ext" --global-option="--cuda_ext" ./ cd ..

Let’s begin our BERT implementation

Let’s start with importing torch and setting seed value.

import torch SEED = 1111 torch.manual_seed(SEED) torch.backends.cudnn.deterministic = True

We are going to use a pre-trained BERTbase model for our task. This model has been trained using specific vocabulary. We need to use the same vocabulary and token-index mapping here also in order to let the model understand our inputs.

The vocabulary, as well as token-index mapping, can be downloaded using BertTokenizer from the transformers library. BERT uses WordPiece vocabulary with a vocab size of around 30,000.

from transformers import BertTokenizer

tokenizer = BertTokenizer.from_pretrained('bert-base-uncased')

len(tokenizer)

Look at an example to see the working of the BERT tokenizer.

tokens = tokenizer.tokenize('Heyy There!! See some boys are playing in rain')

print(tokens)

output:

[‘hey’, ‘##y’, ‘there’, ‘!’, ‘!’, ‘see’, ‘some’, ‘boys’, ‘are’, ‘playing’, ‘in’, ‘rain’]

Tokens can be easily converted to index using a BERT tokenizer.

indexes = tokenizer.convert_tokens_to_ids(tokens) print(indexes)

output:

[4931, 2100, 2045, 999, 999, 2156, 2070, 3337, 2024, 2652, 1999, 4542]

We also need to give input to the BERT in the same format in which BERT has been pre-trained. BERT uses two special tokens denoted as [CLS] and [SEP]. [CLS] token is used as the first token in the input sequence and [SEP] denotes the end of a sentence.

BERT takes all the inputs as a single sequence. If we need to provide more than one input as in our case where our input will be premise and hypothesis, [SEP] token helps the model to understand input properly. Let’s understand the input format with the help of an example.

- Premise: Man is wearing blue jeans.

- Hypothesis: Man is wearing red jeans.

- Input Format: [CLS] Man is wearing blue jeans. [SEP] Man is wearing red jeans. [SEP]

So, here our input to the model will be in the format given above. The pad as well as the unknown token should also match with the BERT tokenizer. We can get these tokens as well as the index corresponding to these tokens from the tokenizer.

cls_token = tokenizer.cls_token sep_token = tokenizer.sep_token pad_token = tokenizer.pad_token unk_token = tokenizer.unk_token print(cls_token, sep_token, pad_token, unk_token)

Output:

[CLS] [SEP] [PAD] [UNK]

cls_token_idx = tokenizer.cls_token_id sep_token_idx = tokenizer.sep_token_id pad_token_idx = tokenizer.pad_token_id unk_token_idx = tokenizer.unk_token_id print(cls_token_idx, sep_token_idx, pad_token_idx, unk_token_idx)

Output:

101 102 0 100

We will now define some functions for tokenizing as well as trimming the sentence if the token length is greater than the maximum defined length. We are restricting the maximum input length to 256 and the maximum length of each sentence at 128.

max_input_length = 256

def tokenize_bert(sentence):

tokens = tokenizer.tokenize(sentence)

return tokens

def split_and_cut(sentence):

tokens = sentence.strip().split(" ")

tokens = tokens[:max_input_length]

return tokens

def trim_sentence(sent):

try:

sent = sent.split()

sent = sent[:128]

return " ".join(sent)

except:

return sent

Download and Prepare datasets

We are going to use SNLI datasets. The datasets can be downloaded and opened directly using the following command.

!wget https://nlp.stanford.edu/projects/snli/snli_1.0.zip

# https://www.geeksforgeeks.org/working-zip-files-python

from zipfile import ZipFile

# specifying the zip file name

file_name = "snli_1.0.zip"

# opening the zip file in READ mode

with ZipFile(file_name, 'r') as zip:

# printing all the contents of the zip file

zip.printdir()

# extracting all the files

print('Extracting all the files now...')

zip.extractall()

print('Done!')

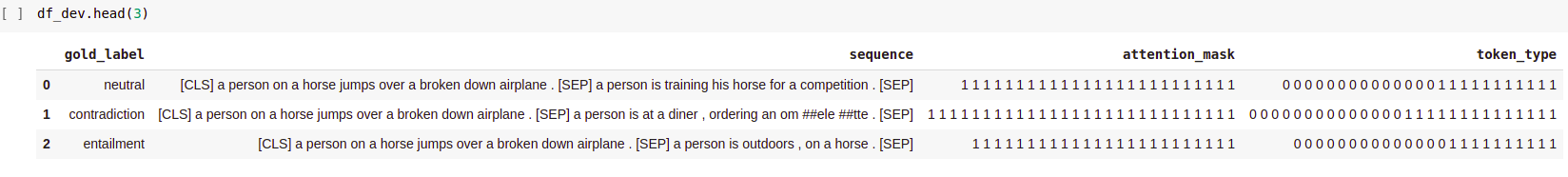

Now, we need to prepare all the inputs for the BERT using our datasets. We need to provide three inputs to the BERT namely tokens index, attention mask and token type ids. Tokens index is our main input containing indexes of the sequence tokens.

Attention mask helps the model to know the useful tokens and padding that is done during batch preparation. Attention mask is basically a sequence of 1’s with the same length as input tokens.

Lastly, Token type ids help the model to know which token belongs to which sentence. For tokens of the first sentence in input, token type ids contain 0 and for second sentence tokens, it contains 1. Let’s understand this with the help of our previous example. Out input tokens will be like this:

- Input tokens: [ ‘[CLS]’, ‘Man’, ‘is’, ‘wearing’, ‘blue’, ‘jeans’, ‘.’, ‘[SEP]’, ‘Man’, ‘is’, ‘wearing’, ‘red’, ‘jeans’, ‘.’, ‘[SEP]’ ]

- Attention_mask: [1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1]

- Token type ids: [0, 0, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 1, 1]

Define helper functions for data preparation.

#Get list of 0s

def get_sent1_token_type(sent):

try:

return [0]* len(sent)

except:

return []

#Get list of 1s

def get_sent2_token_type(sent):

try:

return [1]* len(sent)

except:

return []

#combine from lists

def combine_seq(seq):

return " ".join(seq)

#combines from lists of int

def combine_mask(mask):

mask = [str(m) for m in mask]

return " ".join(mask)

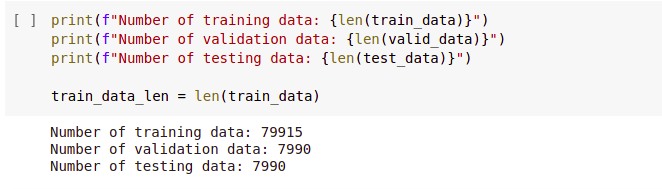

Let’s prepare our input from our data. We are going to use just 80,000 training data and 8000 each for validation and testing. Using whole data would require more training time.

import pandas as pd

#load dataset

df_train = pd.read_csv('snli_1.0/snli_1.0_train.txt', sep='t')

df_dev = pd.read_csv('snli_1.0/snli_1.0_dev.txt', sep='t')

df_test = pd.read_csv('snli_1.0/snli_1.0_test.txt', sep='t')

#Get neccesary columns

df_train = df_train[['gold_label','sentence1','sentence2']]

df_dev = df_dev[['gold_label','sentence1','sentence2']]

df_test = df_test[['gold_label','sentence1','sentence2']]

#Take small dataset

df_train = df_train[:80000]

df_dev = df_train[:8000]

df_test = df_train[:8000]

#Trim each sentence upto maximum length

df_train['sentence1'] = df_train['sentence1'].apply(trim_sentence)

df_train['sentence2'] = df_train['sentence2'].apply(trim_sentence)

df_dev['sentence1'] = df_dev['sentence1'].apply(trim_sentence)

df_dev['sentence2'] = df_dev['sentence2'].apply(trim_sentence)

df_test['sentence1'] = df_test['sentence1'].apply(trim_sentence)

df_test['sentence2'] = df_test['sentence2'].apply(trim_sentence)

#Add [CLS] and [SEP] tokens

df_train['sent1'] = '[CLS] ' + df_train['sentence1'] + ' [SEP] '

df_train['sent2'] = df_train['sentence2'] + ' [SEP]'

df_dev['sent1'] = '[CLS] ' + df_dev['sentence1'] + ' [SEP] '

df_dev['sent2'] = df_dev['sentence2'] + ' [SEP]'

df_test['sent1'] = '[CLS] ' + df_test['sentence1'] + ' [SEP] '

df_test['sent2'] = df_test['sentence2'] + ' [SEP]'

#Apply Bert Tokenizer for tokeinizing

df_train['sent1_t'] = df_train['sent1'].apply(tokenize_bert)

df_train['sent2_t'] = df_train['sent2'].apply(tokenize_bert)

df_dev['sent1_t'] = df_dev['sent1'].apply(tokenize_bert)

df_dev['sent2_t'] = df_dev['sent2'].apply(tokenize_bert)

df_test['sent1_t'] = df_test['sent1'].apply(tokenize_bert)

df_test['sent2_t'] = df_test['sent2'].apply(tokenize_bert)

#Get Topen type ids for both sentence

df_train['sent1_token_type'] = df_train['sent1_t'].apply(get_sent1_token_type)

df_train['sent2_token_type'] = df_train['sent2_t'].apply(get_sent2_token_type)

df_dev['sent1_token_type'] = df_dev['sent1_t'].apply(get_sent1_token_type)

df_dev['sent2_token_type'] = df_dev['sent2_t'].apply(get_sent2_token_type)

df_test['sent1_token_type'] = df_test['sent1_t'].apply(get_sent1_token_type)

df_test['sent2_token_type'] = df_test['sent2_t'].apply(get_sent2_token_type)

#Combine both sequences

df_train['sequence'] = df_train['sent1_t'] + df_train['sent2_t']

df_dev['sequence'] = df_dev['sent1_t'] + df_dev['sent2_t']

df_test['sequence'] = df_test['sent1_t'] + df_test['sent2_t']

#Get attention mask

df_train['attention_mask'] = df_train['sequence'].apply(get_sent2_token_type)

df_dev['attention_mask'] = df_dev['sequence'].apply(get_sent2_token_type)

df_test['attention_mask'] = df_test['sequence'].apply(get_sent2_token_type)

#Get combined token type ids for input

df_train['token_type'] = df_train['sent1_token_type'] + df_train['sent2_token_type']

df_dev['token_type'] = df_dev['sent1_token_type'] + df_dev['sent2_token_type']

df_test['token_type'] = df_test['sent1_token_type'] + df_test['sent2_token_type']

#Now make all these inputs as sequential data to be easily fed into torchtext Field.

df_train['sequence'] = df_train['sequence'].apply(combine_seq)

df_dev['sequence'] = df_dev['sequence'].apply(combine_seq)

df_test['sequence'] = df_test['sequence'].apply(combine_seq)

df_train['attention_mask'] = df_train['attention_mask'].apply(combine_mask)

df_dev['attention_mask'] = df_dev['attention_mask'].apply(combine_mask)

df_test['attention_mask'] = df_test['attention_mask'].apply(combine_mask)

df_train['token_type'] = df_train['token_type'].apply(combine_mask)

df_dev['token_type'] = df_dev['token_type'].apply(combine_mask)

df_test['token_type'] = df_test['token_type'].apply(combine_mask)

df_train = df_train[['gold_label', 'sequence', 'attention_mask', 'token_type']]

df_dev = df_dev[['gold_label', 'sequence', 'attention_mask', 'token_type']]

df_test = df_test[['gold_label', 'sequence', 'attention_mask', 'token_type']

df_train = df_train.loc[df_train['gold_label'].isin(['entailment','contradiction','neutral'])]

df_dev = df_dev.loc[df_dev['gold_label'].isin(['entailment','contradiction','neutral'])]

df_test = df_test.loc[df_test['gold_label'].isin(['entailment','contradiction','neutral'])

#Save prepared data as csv file

df_train.to_csv('snli_1.0/snli_1.0_train.csv', index=False)

df_dev.to_csv('snli_1.0/snli_1.0_dev.csv', index=False)

df_test.to_csv('snli_1.0/snli_1.0_test.csv', index=False)

# To convert back attention mask and token type ids to integer.

def convert_to_int(tok_ids):

tok_ids = [int(x) for x in tok_ids]

return tok_ids

We are going to use torchtext Field and TabularDataset for loading our datasets as PyTorch tensor and then we will use BucketIterator for creating batches. Torchtext’s inbuilt features make it very easy to load our dataset for training and testing. We will be using batches of batch size 16 as larger batch size will result in GPU memory overflow. Reduce the batch size to 8 if a memory error is taking place.

#For latest version use torchtext.legacy

from torchtext import data

#For sequence

TEXT = data.Field(batch_first = True,

use_vocab = False,

tokenize = split_and_cut,

preprocessing = tokenizer.convert_tokens_to_ids,

pad_token = pad_token_idx,

unk_token = unk_token_idx)

#For label

LABEL = data.LabelField()

#For Attention mask

ATTENTION = data.Field(batch_first = True,

use_vocab = False,

tokenize = split_and_cut,

preprocessing = convert_to_int,

pad_token = pad_token_idx)

#For token type ids

TTYPE = data.Field(batch_first = True,

use_vocab = False,

tokenize = split_and_cut,

preprocessing = convert_to_int,

pad_token = 1)

Fields will help to map the column with the torchtext Field.

fields = [('label', LABEL), ('sequence', TEXT), ('attention_mask', ATTENTION), ('token_type', TTYPE)]

train_data, valid_data, test_data = data.TabularDataset.splits(

path = 'snli_1.0',

train = 'snli_1.0_train.csv',

validation = 'snli_1.0_dev.csv',

test = 'snli_1.0_test.csv',

format = 'csv',

fields = fields,

skip_header = True)

For sequence, we are using BERT vocabulary but for the label, we need to build vocabulary.

LABEL.build_vocab(train_data)

BucketIterator helps in the easy preparation of batches for training.

#Create iterator

BATCH_SIZE = 16

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

train_iterator, valid_iterator, test_iterator = data.BucketIterator.splits(

(train_data, valid_data, test_data),

batch_size = BATCH_SIZE,

sort_key = lambda x: len(x.sequence),

sort_within_batch = False,

device = device)

We will start with downloading pre-trained BERT.

from transformers import BertModel

bert_model = BertModel.from_pretrained('bert-base-uncased')

We are going to use the BERT architecture only with the addition of just one linear layer for the output prediction. The last hidden layer corresponding to [CLS] token will be fed into the output layer of size num_classes that is three here.

import torch.nn as nn

class BERTNLIModel(nn.Module):

def __init__(self,

bert_model,

hidden_dim,

output_dim,

):

super().__init__()

self.bert = bert_model

embedding_dim = bert_model.config.to_dict()['hidden_size']

self.out = nn.Linear(embedding_dim, output_dim)

def forward(self, sequence, attn_mask, token_type):

embedded = self.bert(input_ids = sequence, attention_mask = attn_mask, token_type_ids= token_type)[1]

output = self.out(embedded)

return output

Load model.

#defining model

HIDDEN_DIM = 512

OUTPUT_DIM = len(LABEL.vocab)

model = BERTNLIModel(bert_model,

HIDDEN_DIM,

OUTPUT_DIM,

).to(device)

Our model has almost 110M parameters.

Let’s define the loss function and optimizer for our model.

from transformers import *

import torch.optim as optim

optimizer = AdamW(model.parameters(),lr=2e-5,eps=1e-6,correct_bias=False)

def get_scheduler(optimizer, warmup_steps):

scheduler = get_constant_schedule_with_warmup(optimizer, num_warmup_steps=warmup_steps)

return scheduler

criterion = nn.CrossEntropyLoss().to(device)

mp = True

if mp:

try:

from apex import amp

except ImportError:

raise ImportError("Please install apex from https://www.github.com/nvidia/apex to use fp16 training.")

model, optimizer = amp.initialize(model, optimizer, opt_level='O1')

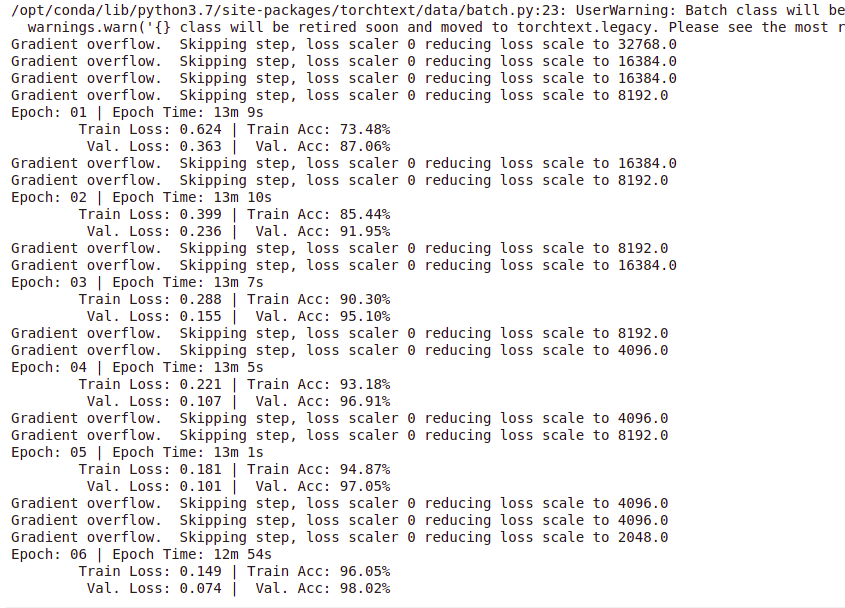

Training of our model

Finally, we will train our model. Train() will be executed for each epoch to train the model and evaluate() will give validation loss and accuracy. Epoch num is set as 6 although we will get good accuracy from the first epoch itself as we are using pre-trained weights.

To calculate accuracy.

def categorical_accuracy(preds, y):

max_preds = preds.argmax(dim = 1, keepdim = True)

correct = (max_preds.squeeze(1)==y).float()

return correct.sum() / len(y)

Define train() function.

max_grad_norm = 1 def train(model, iterator, optimizer, criterion, scheduler): epoch_loss = 0 epoch_acc = 0 model.train() for batch in iterator: optimizer.zero_grad() # clear gradients first torch.cuda.empty_cache() # releases all unoccupied cached memory sequence = batch.sequence attn_mask = batch.attention_mask token_type = batch.token_type label = batch.label predictions = model(sequence, attn_mask, token_type) loss = criterion(predictions, label) acc = categorical_accuracy(predictions, label) if mp: with amp.scale_loss(loss, optimizer) as scaled_loss: scaled_loss.backward() torch.nn.utils.clip_grad_norm_(amp.master_params(optimizer), max_grad_norm) else: loss.backward() optimizer.step() scheduler.step() epoch_loss += loss.item() epoch_acc += acc.item() return epoch_loss / len(iterator), epoch_acc / len(iterator)

Define evaluate() function.

def evaluate(model, iterator, criterion): epoch_loss = 0 epoch_acc = 0 model.eval() with torch.no_grad(): for batch in iterator: sequence = batch.sequence attn_mask = batch.attention_mask token_type = batch.token_type labels = batch.label predictions = model(sequence, attn_mask, token_type) loss = criterion(predictions, labels) acc = categorical_accuracy(predictions, labels) epoch_loss += loss.item() epoch_acc += acc.item() return epoch_loss / len(iterator), epoch_acc / len(iterator)

To compute epoch time.

import time def epoch_time(start_time, end_time): elapsed_time = end_time - start_time elapsed_mins = int(elapsed_time / 60) elapsed_secs = int(elapsed_time - (elapsed_mins * 60)) return elapsed_mins, elapsed_secs

Training steps.

import math

N_EPOCHS = 6

warmup_percent = 0.2

total_steps = math.ceil(N_EPOCHS*train_data_len*1./BATCH_SIZE)

warmup_steps = int(total_steps*warmup_percent)

scheduler = get_scheduler(optimizer, warmup_steps)

best_valid_loss = float('inf')

for epoch in range(N_EPOCHS)

start_time = time.time()

train_loss, train_acc = train(model, train_iterator, optimizer, criterion, scheduler)

valid_loss, valid_acc = evaluate(model, valid_iterator, criterion)

end_time = time.time()

epoch_mins, epoch_secs = epoch_time(start_time, end_time)

if valid_loss < best_valid_loss:

best_valid_loss = valid_loss

torch.save(model.state_dict(), 'bert-nli.pt')

print(f'Epoch: {epoch+1:02} | Epoch Time: {epoch_mins}m {epoch_secs}s')

print(f'tTrain Loss: {train_loss:.3f} | Train Acc: {train_acc*100:.2f}%')

print(f't Val. Loss: {valid_loss:.3f} | Val. Acc: {valid_acc*100:.2f}%')

Load best model weights and evaluate on test data.

model.load_state_dict(torch.load('bert-nli.pt'))

test_loss, test_acc = evaluate(model, test_iterator, criterion)

print(f'Test Loss: {test_loss:.3f} | Test Acc: {test_acc*100:.2f}%')

Output: Test Loss: 0.074 | Test Acc: 98.02%

Model Performance

Let’s see the performance of the model on our custom input.

def predict_inference(premise, hypothesis, model, device):

model.eval()

premise = '[CLS] ' + premise + ' [SEP]'

hypothesis = hypothesis + ' [SEP]'

prem_t = tokenize_bert(premise)

hypo_t = tokenize_bert(hypothesis)

prem_type = get_sent1_token_type(prem_t)

hypo_type = get_sent2_token_type(hypo_t)

indexes = prem_t + hypo_t

indexes = tokenizer.convert_tokens_to_ids(indexes)

indexes_type = prem_type + hypo_type

attn_mask = get_sent2_token_type(indexe

indexes = torch.LongTensor(indexes).unsqueeze(0).to(device)

indexes_type = torch.LongTensor(indexes_type).unsqueeze(0).to(device)

attn_mask = torch.LongTensor(attn_mask).unsqueeze(0).to(device)

prediction = model(indexes, attn_mask, indexes_type)

prediction = prediction.argmax(dim=-1).item()

return LABEL.vocab.itos[prediction]

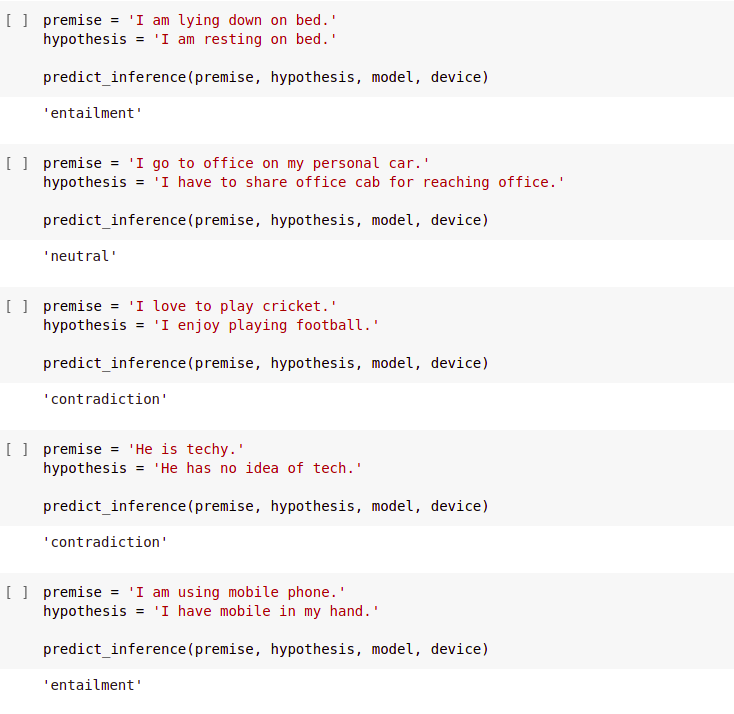

Custom Examples:

premise = 'a man sitting on a green bench.' hypothesis = 'a woman sitting on a green bench.' predict_inference(premise, hypothesis, model, device)

Output: ‘contradiction’

From the custom examples, we can see that our model is able to correctly predict outcome in most cases.

Get the full Jupyter notebook from here.

Reference:

- https://github.com/NVIDIA/apex

- https://github.com/bentrevett/pytorch-sentiment-analysis/blob/master/6%20-%20Transformers%20for%20Sentiment%20Analysis.ipynb

About the Author:

I am Raman Kumar, currently in the final year of my college. I am enthusiastic about deep learning especially Natural Language Processing. You can find me on LinkedIn.

The media shown in this article are not owned by Analytics Vidhya and is used at the Author’s discretion.