Introduction

If you are a Data Scientist or MLOps Engineer, at some point, you would have faced problems tracking code, data, and models for different versions of the same task while collaborating with fellow members. To reduce the complexity revolving around MLOps, ClearML, an end-to-end MLOps platform, can be utilized for these purposes, facilitating easy code development, orchestrating ML workflow, and automating deploying ML models to production. This platform reduces workflow overhead by making them reproducible and scalable. Let us begin with the tutorial on MNIST Digit Classification Using ClearML.

Learning Objectives

With this article, you will learn the following:

- Key Features of ClearML

- Step-by-step tutorial on how to install ClearML

- Step-by-step tutorial on MNIST digit classification using ClearML

- Tasks that can be performed using ClearML and jupyter notebook

This article was published as a part of the Data Science Blogathon.

Table of Contents

Key Features

- Orchestrate: It helps in provisioning code, data, and environment in our existing infrastructure for easy orchestration.

- Automate: ClearML makes shipping ML to production easy with its auto-scaling workflow feature. It also has the capability to launch any workflow locally or in its cloud platform.

- Experiment Management: It enables you to monitor, organise, and compare all of your experiments in an one spot. This allows you to conveniently track hyperparameters, code versions, and experiment findings to make informed decisions.

- Model Management: It lets you easily register, version, and deploy your ML models. You can use it for packaging your models, collaborating with your team members, and deploying your models to production.

- Automatic Logging: It automatically logs all your experiment results, including metrics, graphs, and output files. You can easily visualize your results in a dashboard, share them with others, and compare them to previous experiments.

- Distributed Training: It allows for distributed training over several machines or GPUs. It allows you to scale your trials and shorten your training time easily.

- Integration: It integrates with various prominent machine learning frameworks, including TensorFlow PyTorch, and scikit-learn. It also interfaces with other tools like TensorFlow and Git.

- Collaboration: It allows you to easily collaborate with team members, share experiments and models, and follow the status of your projects. It provides you with role-based access control, collaborative tools, and automated notifications.

- Cloud Deployment: It supports deploying your models to popular cloud platforms such as AWS, Azure, and GCP. It also provides pre-built integrations with popular deployment tools like Kubernetes and Docker.

ClearML Tutorial on MNIST Classification Task

We will go through a step-by-step tutorial on MNIST digit classification using ClearML to help you understand the various features available on the ClearML platform. We will train the neural network to learn the features using forward propagation and backpropagation. The final output from the neural network will be a vector of 10 scores – one for each handwritten digit image. We will also evaluate our model’s ability to classify the images on the test set. First, you need to sign up on the ClearML platform. You can sign up for free on the ClearML platform.

Installation

You can install the ClearML python package from the PyPI repository using the following command:

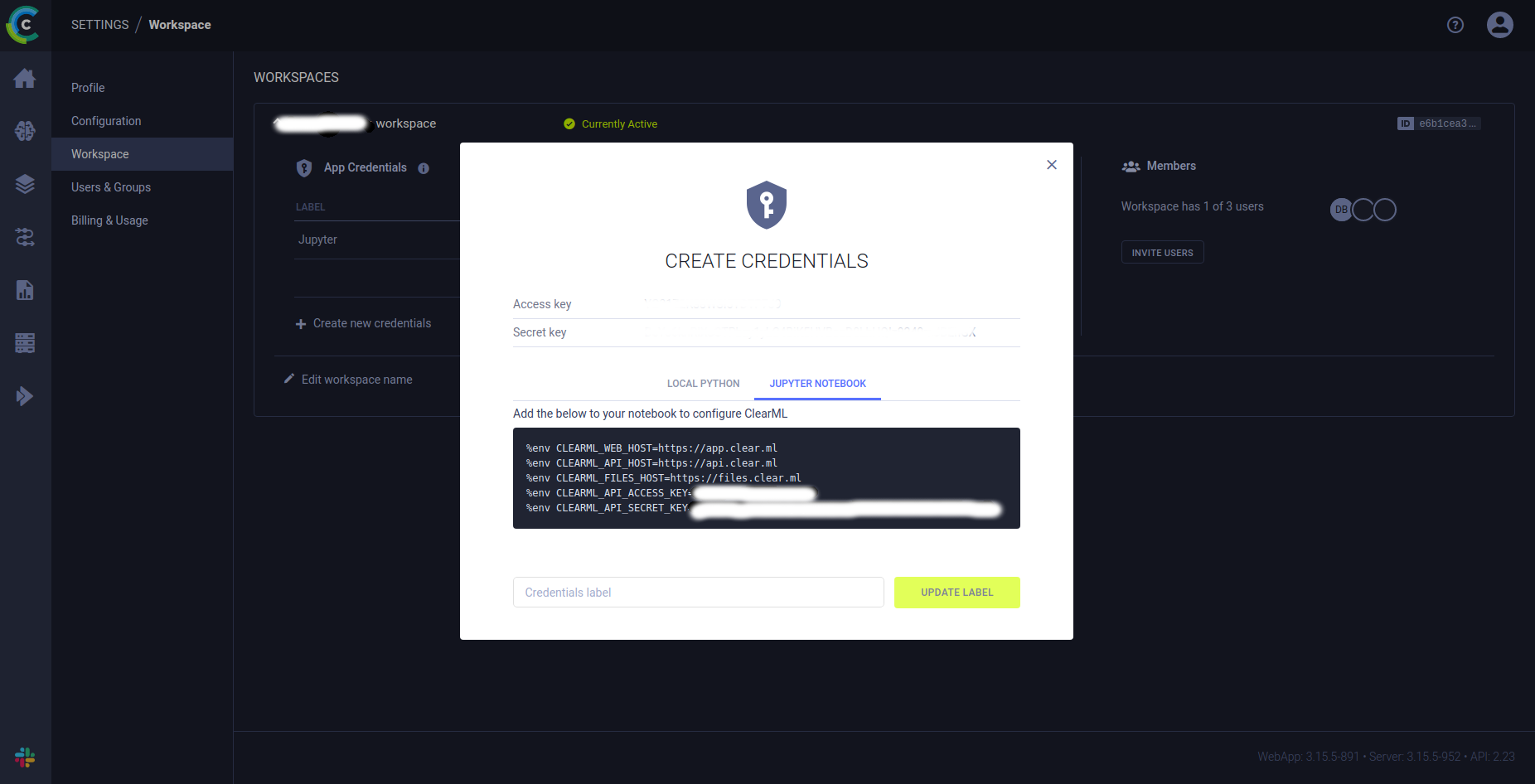

pip install clearmlIn this tutorial, we will be using Jupyter notebook for development purposes. In the ClearML web browser, we generate credentials for connecting ClearML SDK to the server. Open the ClearML Web UI in a browser. On the SETTINGS > WORKSPACE page, click Create new credentials. The JUPYTER NOTEBOOK tab shows the commands required to configure your notebook (a copy to clipboard action is available on hover). Add these commands to your notebook, and we are good to go!

MNIST Classification Using ClearML

You can create a new python virtual environment for this tutorial. You can also download the code from my public GitHub repository from here by using the following command and then installing the necessary dependency packages:

git clone https://github.com/dheerajnbhat/clearml-mnist-tf.git

cd clearml-mnist-tf

pip install -r requirements.txtAfter cloning, go to the folder and open the Jupyter notebook. Then, change the credentials CLEARML_API_ACCESS_KEY and CLEARML_API_SECRET_KEY in the first cell to the credentials that you generated above, and run the rest of the code cells without modification.

First, we add the credentials to the jupyter notebook

%env CLEARML_WEB_HOST=https://app.clear.ml

%env CLEARML_API_HOST=https://api.clear.ml

%env CLEARML_FILES_HOST=https://files.clear.ml

# Jupyter

%env CLEARML_API_ACCESS_KEY='YOUR_CLEARML_API_ACCESS_KEY'

%env CLEARML_API_SECRET_KEY='YOUR_CLEARML_API_SECRET_KEY'We then import the necessary packages for running the task:

import os

import tempfile

import numpy as np

import matplotlib.pyplot as plt

from clearml import Task

from tensorflow.keras.callbacks import ModelCheckpoint, TensorBoard

from tensorflow.keras.datasets import mnist

from tensorflow.keras.layers import Activation, Dense

from tensorflow.keras.models import SequentialNow, we connect our jupyter notebook to the ClearML using the following code:

task = Task.init(

project_name='mnist_digit_classification',

task_name='dev_experiment'

)We initialize the parameters that we will be using to train the model as a dictionary which ClearML will track:

# Set script parameters

task_params = {

'batch_size': 64,

'nb_classes': 10,

'nb_epoch': 6,

'hidden_dim': 512,

}

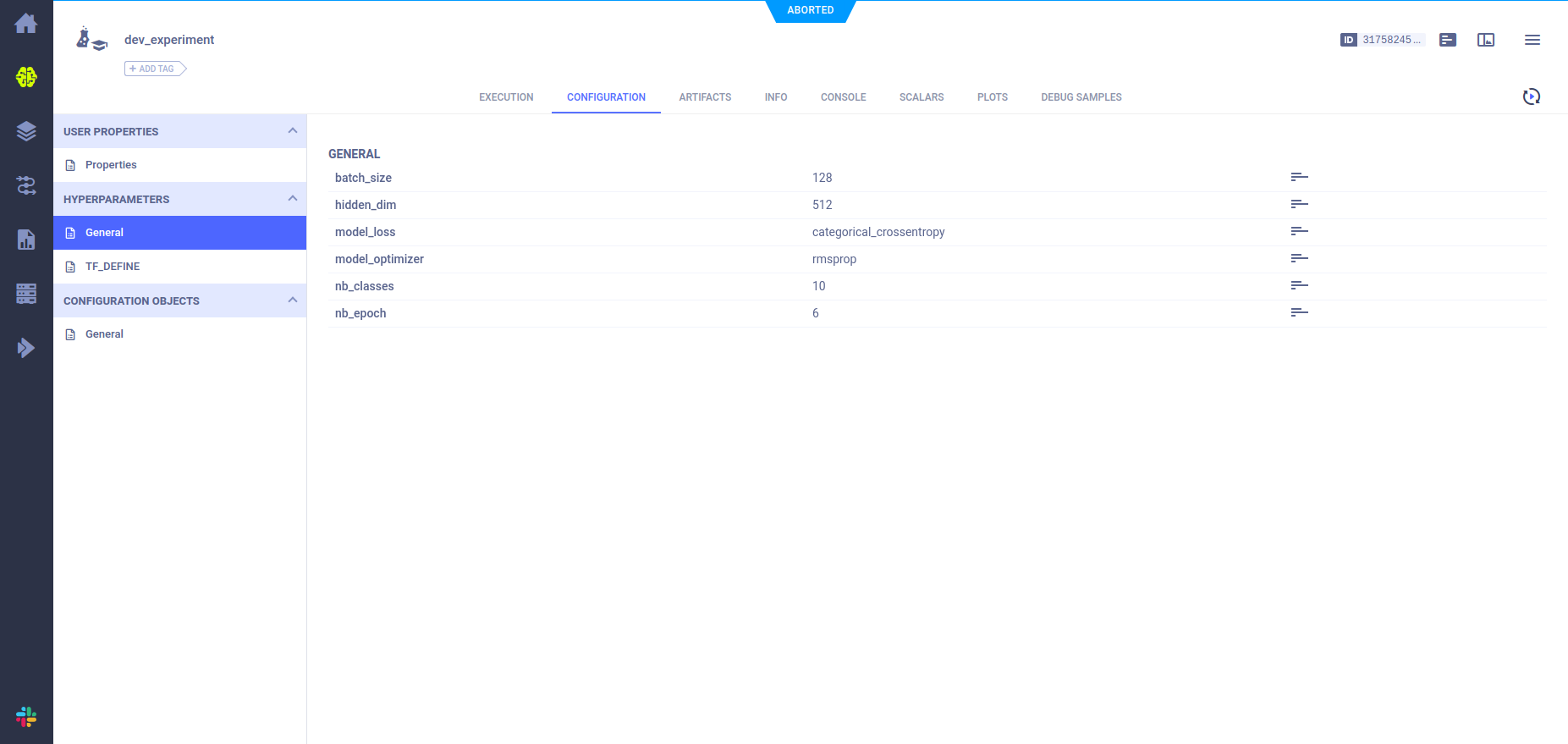

task_params = task.connect(task_params)ClearML can track the changes in the script that are modified anywhere in the code. For showcasing this feature, let us now modify the batch size from 64 to 128 and also add some more key-value pairs to the variable task_params:

# You can notice that, updating the task_params is traced and updated in ClearML UI

task_params['batch_size'] = 128

task_params['model_loss'] = 'categorical_crossentropy'

task_params['model_optimizer'] = 'rmsprop'Now, we load our MNIST dataset to a dictionary:

raw_data_dir = os.getcwd() + '/'

data_sources = {

"training_images": "train-images-idx3-ubyte.gz", # 60,000 training images.

"test_images": "t10k-images-idx3-ubyte.gz", # 10,000 test images.

"training_labels": "train-labels-idx1-ubyte.gz", # 60,000 training labels.

"test_labels": "t10k-labels-idx1-ubyte.gz", # 10,000 test labels.

}#import csvWe decompress the 4 files and create 4 ndarrays, saving them into a dictionary. Each original image is of size 28×28, and neural networks normally expect a 1D vector input; therefore, we also need to reshape the images by multiplying 28×28 (784).

mnist_dataset = {}

# Images

for key in ("training_images", "test_images"):

with gzip.open(os.path.join(raw_data_dir, data_sources[key]), "rb") as mnist_file:

mnist_dataset[key] = np.frombuffer(

mnist_file.read(), np.uint8, offset=16

).reshape(-1, 28 * 28)

# Labels

for key in ("training_labels", "test_labels"):

with gzip.open(os.path.join(raw_data_dir, data_sources[key]), "rb") as mnist_file:

mnist_dataset[key] = np.frombuffer(mnist_file.read(), np.uint8, offset=8)Now, we split the data into training and test set, using the standard notation of “x” for data and “y” for labels:

x_train, y_train, x_test, y_test = (

mnist_dataset["training_images"],

mnist_dataset["training_labels"],

mnist_dataset["test_images"],

mnist_dataset["test_labels"],

)Let us checkout out a sample image from the training data by plotting it using the matplotlib library

mnist_image = x_train[59999, :].reshape(28, 28)

plt.imshow(mnist_image)

# Display the image.

plt.show()Now, we process the data by converting the data type to “float32” and normalizing the image samples:

X_train = X_train.reshape(60000, 784)

X_test = X_test.reshape(10000, 784)

X_train = X_train.astype('float32')

X_test = X_test.astype('float32')

X_train /= 255.

X_test /= 255.

print(X_train.shape[0], 'train samples')

print(X_test.shape[0], 'test samples')We can see that there are 60000 training samples and 10000 testing samples. We also need to convert the labels to one hot encoded vector, where there are 10 classes, with each class corresponding to one digit:

y_train = np_utils.to_categorical(y_train, task_params['nb_classes'])

y_test = np_utils.to_categorical(y_test, task_params['nb_classes'])Now, we create a model using keras for training:

model = Sequential()

model.add(Dense(task_params['hidden_dim'], input_shape=(784,)))

model.add(Activation('relu'))

# model.add(Dropout(0.2))

model.add(Dense(task_params['hidden_dim']))

model.add(Activation('relu'))

# model.add(Dropout(0.2))

model.add(Dense(10))

model.add(Activation('softmax'))

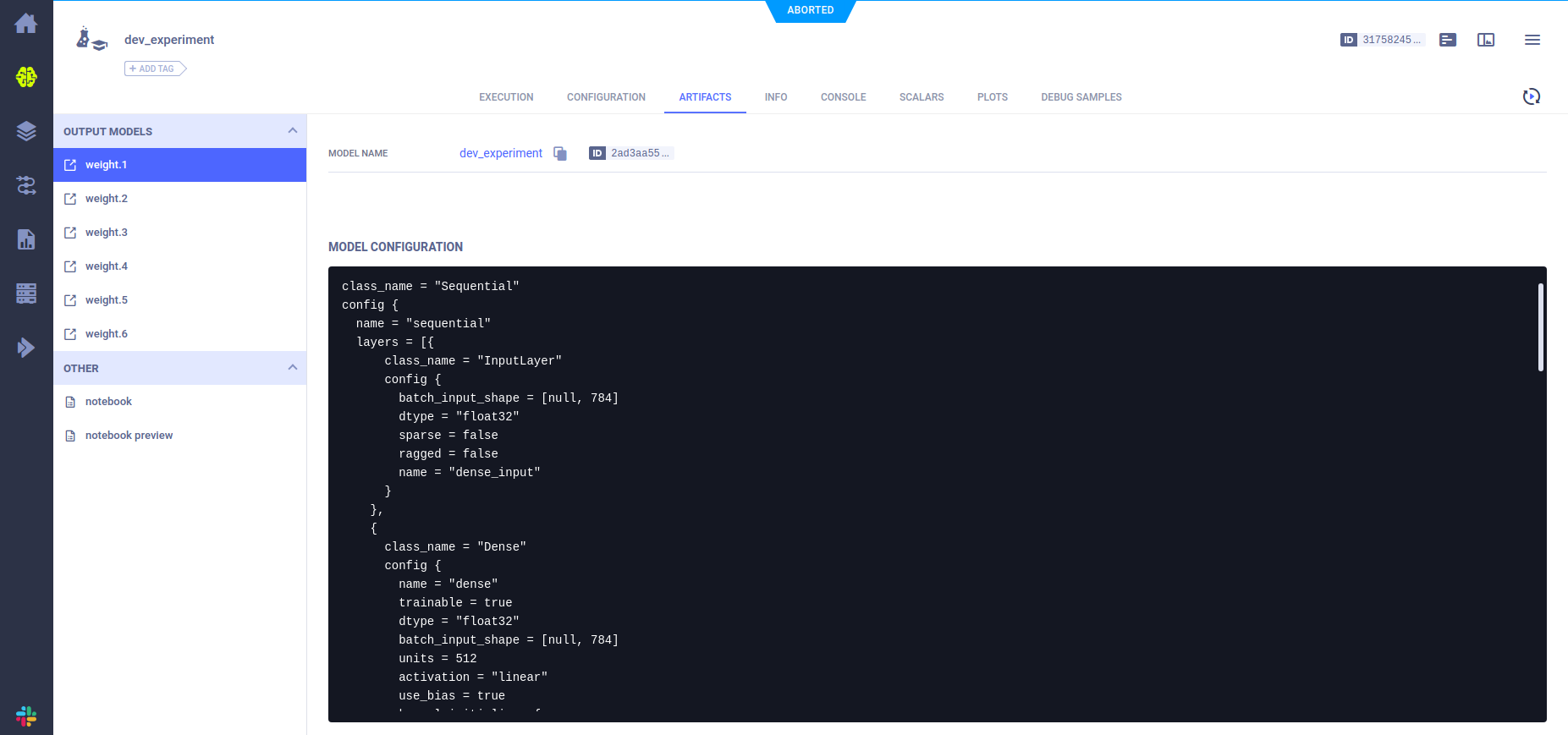

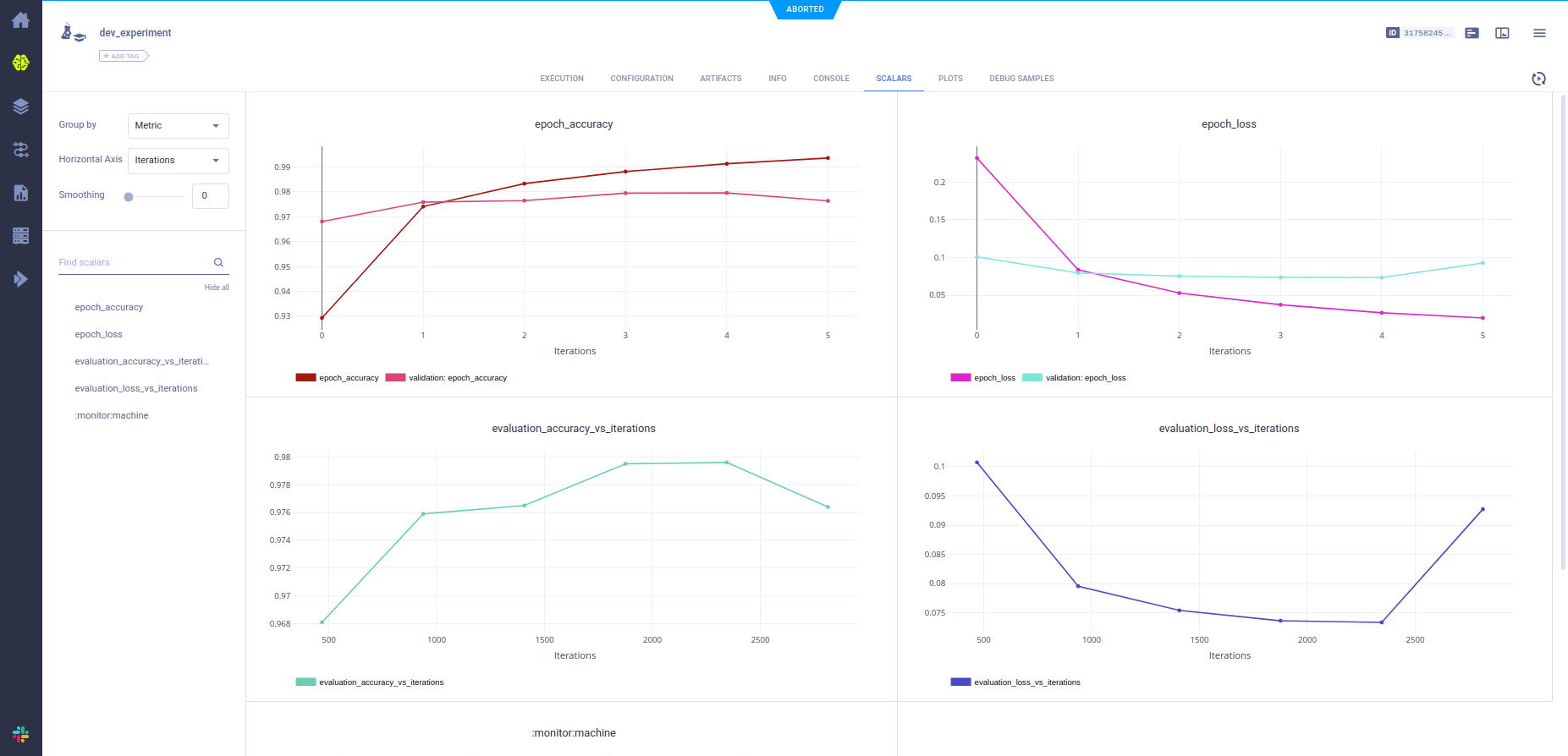

model.summary()It is a Sequential model having two Dense layers of 512 units of hidden layers, having ReLU activation. The last layer is a Dense layer with 10 output units with softmax activation to predict the probability of 10 classes. After initializing the model, we train the model using the training samples created earlier. We use ModelCheckpoint class to store model weights after each training epoch and TensorBoard for tracking different metrics. These callbacks are tracked by ClearML, which can be visualized in their web UI once the training completes.

model.compile(loss=task_params['model_loss'],

optimizer=task_params['model_optimizer'],

metrics=['accuracy'])

board = TensorBoard(

histogram_freq=1,

log_dir=os.path.join(

tempfile.gettempdir(),

'histogram_example'

)

)

model_store = ModelCheckpoint(

filepath=os.path.join(

tempfile.gettempdir(),

'weight.{epoch}.hdf5'

)

)

model.fit(

x_train, y_train,

batch_size=task_params['batch_size'],

epochs=task_params['nb_epoch'],

callbacks=[board, model_store],

verbose=1, validation_data=(x_test, y_test)

)After successfully training the model, we need to test it for accuracy. We use the testing data and labels to get the loss and accuracy of the trained model.

score = model.evaluate(x_test, y_test, verbose=0)

print('Test loss:', score[0])

print('Test accuracy:', score[1])After completely running the notebook cells, we successfully trained and tested the model. As a Data Scientist, you would run multiple experiments, training different models and hyperparameters on the same task. That is where ClearML reduces the burden of maintaining too many notebooks or scripts for every experiment we have run. While running a new experiment on the same task, you can change the experiment name for the same project so that the experiment gets logged on to ClearML under the same project as shown below:

task = Task.init(

project_name='mnist_digit_classification',

task_name='dev_experiment_1'

)ClearML UI Visualization

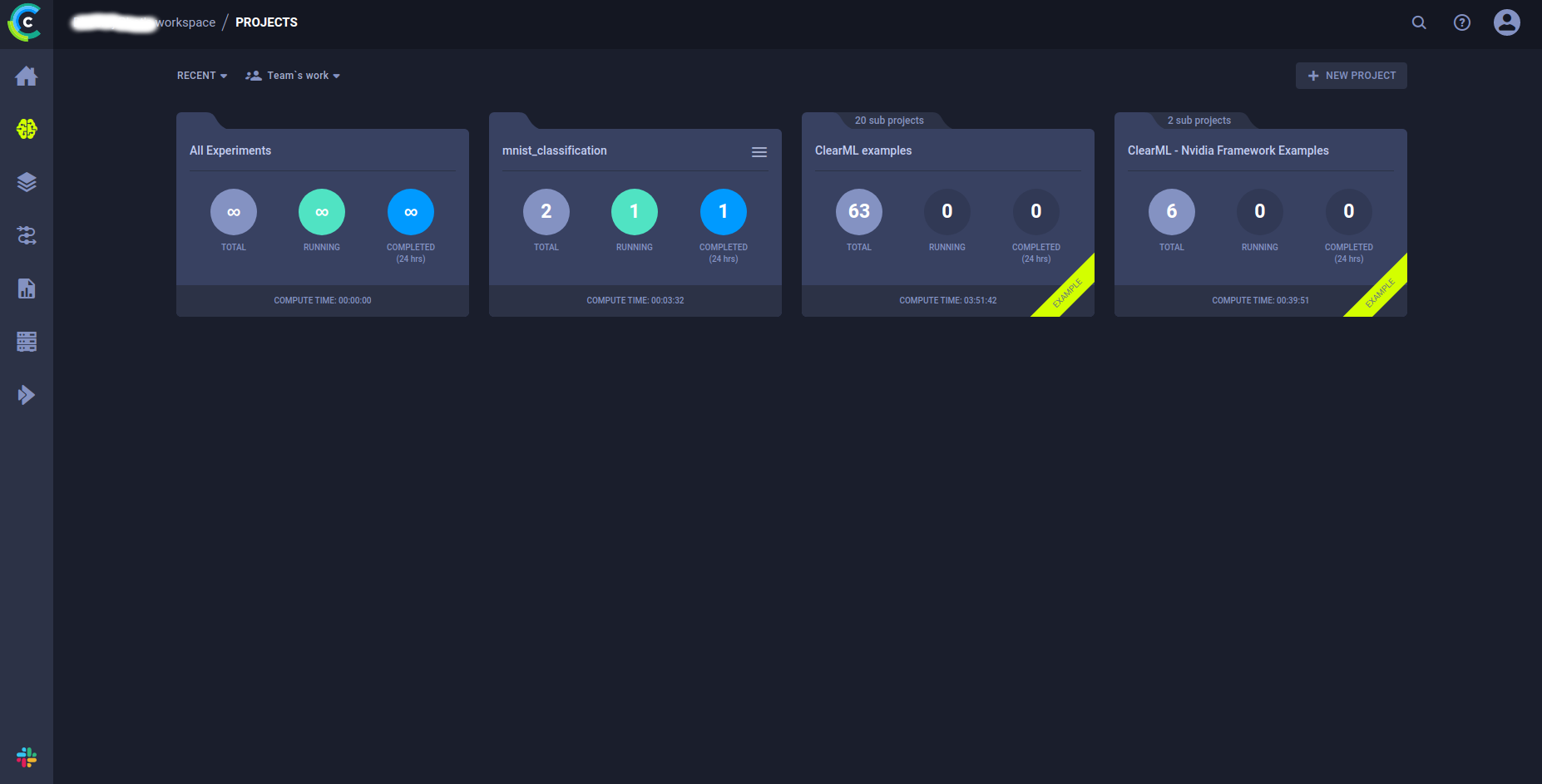

Dashboard

The dashboard page shows all the projects you can access and some examples to get you started with ClearML.

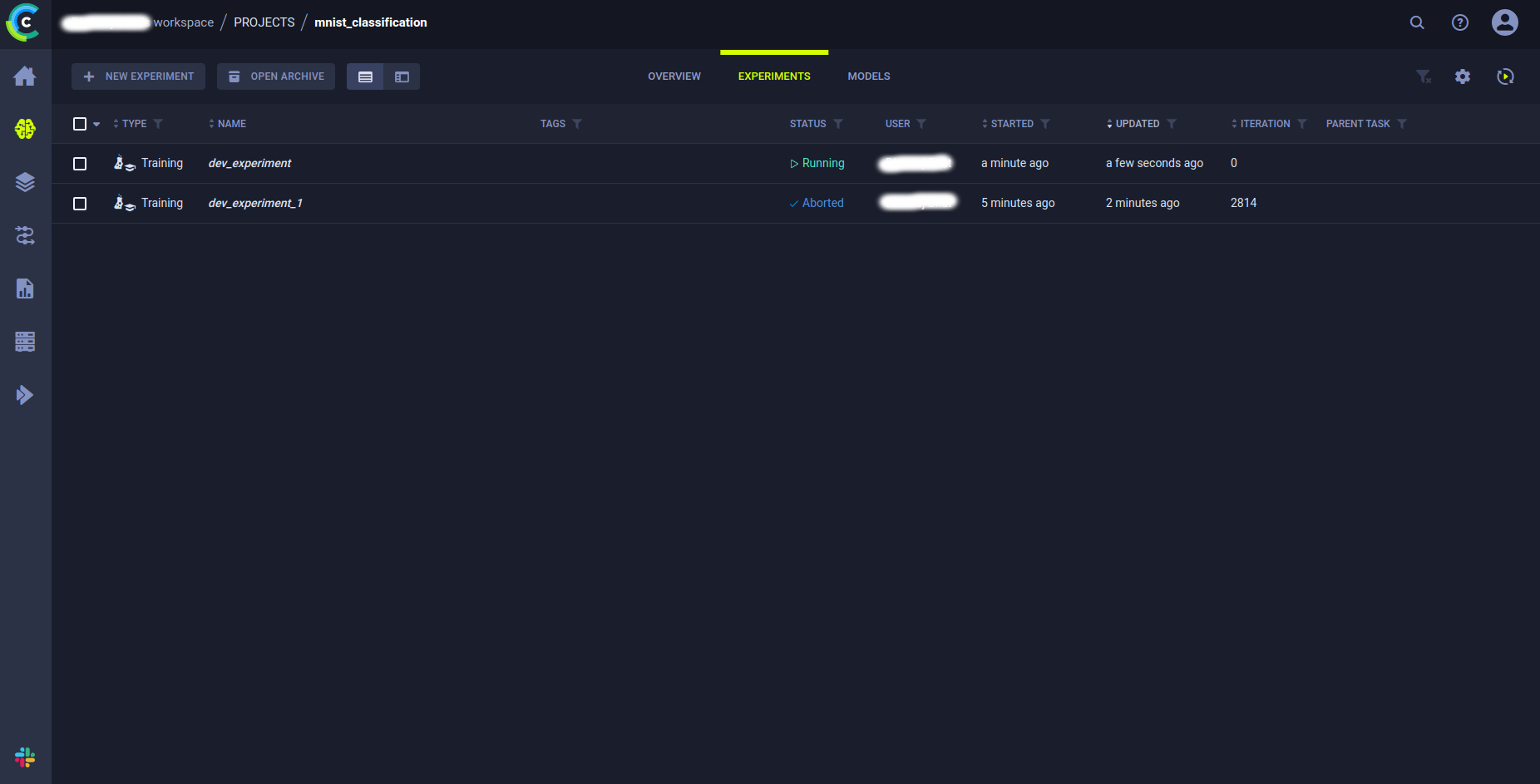

Projects

You can go to the project you have run in this tutorial (Project name: mnist_digit_classification) which shows all the experiments we have run in this task.

Experiments

You can view all the details of the experiments by right-clicking on any of the experiments, and you can view different tabs with all the experiment details.

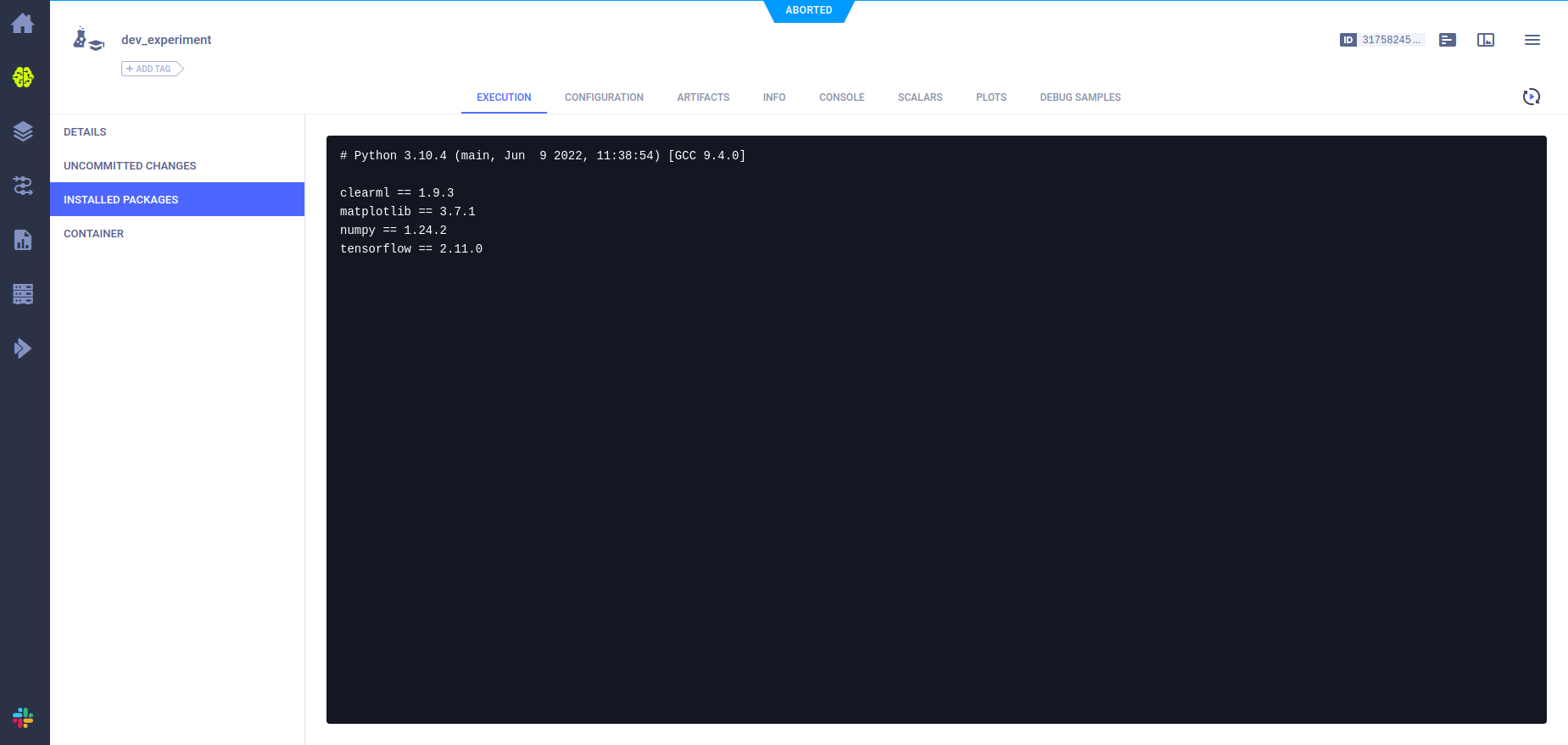

The project execution tab shows details like all the installed packages, container details (if any), uncommitted changes, etc.

The project configuration tab shows all the configurations we have added and the default configurations used while running the experiments.

The project artifacts tab shows all the model architecture and configuration details for every epoch run in the experiments.

The project scalars tab shows all the plots for training and validation data, like accuracy and loss during each training epoch.

This tutorial was meant to help you start with the ClearML platform and give you a basic understanding of the features and capabilities ClearML can offer for developing a robust development. After the development stage, you can also use ClearML for deploying and automating production workflow.

Combining Jupyter Notebook with ClearML

With the combination of Jupyter notebook with ClearML, you can do the following:

- Auto Logging: Since we use notebooks for experimentation, notebooks can become messy, and it might be painful to reproduce experiments. ClearML has an auto-logging feature that keeps all the executed code in a logging file to save time and makes code reproducible. It also keeps a .py file of the notebook for easy reproducibility.

- Versioning: As shown earlier in this tutorial, we can version our experiments for the task we are running and easily view all the details of the experiments that have been run using ClearML.

- Hyperparameter Optimization: ClearML hyperparameter optimization feature lets us automate the process of selecting the best ML model from different experiments.

- Live Tracking: ClearML agent helps in deploying the model and tracking the performance of the model live using the ClearML dashboard.

- Team Collaboration: It helps in collaborating with fellow team members to share your code, data, and experimentation with Role Based Access for enabling smooth operations.

Conclusion

ClearML also supports frameworks like PyTorch, TensorFlow, scikit-learn, XGBoost, Optuna, etc, to integrate with the latest tech stacks hassle-free. To summarize briefly, ClearML has many features that help you, from the development stage to the production of your ML workflow. You can check out my GitHub repository for the code used in this tutorial here. The key takeaways from this article are:

- Understanding how ClearML offers capabilities to perform MLOps at the production level

- Steps to install and use ClearML

- Walk-through tutorial using ClearML on MNIST classification task using jupyter notebook

- Walk-through of ClearML UI to showcase all the details of the MNIST experiment

- Tasks that can be performed using ClearML and jupyter notebook

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.